Higher Development Pace Does Not Simplify the Path to Product Success

As AI's coding capabilities improve, we tend to paint a rosy picture of product development. In reality, a higher development pace is not necessarily an advantage.

There’s a piece of news circulating in various contexts: AI already writes itself. It’s most typically followed by a heartfelt “Yippee!”

In his essay that went viral, Matt Shumer painted a fairly typical “AI is there to take your jobs” picture. Yet the bit that few people picked up on was about GPT developing itself.

Dario Amodei made a similar claim about Claude. Actually, he does that quite routinely. And Boris Cherny, the creator of Claude Code, explains how they get Claude to write its own code.

We could consider what these claims actually mean. I mean, it’s not that critical who or what types the code. What matters is who or what reviews, judges, and accepts it. And how.

We could also ask my favorite question: What does the endgame look like? There are some reasons to worry, as we know that models trained on their own outputs tend to collapse (to use the term researchers chose).

I won’t dig into these today, though. For the sake of this conversation, I can even go with the hype. So let’s assume that AI indeed writes itself. If it can do something that sophisticated, it should be able to build your startup’s MVP as well, shouldn’t it?

The point is, it’s the wrong question altogether. Let’s look at a few startups from the large collection we’ve worked with over two decades and see what we can learn.

Agriculture Startup

The basic idea was to monetize quite sophisticated data that the startup had access to. The product would serve as a reliable early warning of conditions that require farmers to make extra preparations.

Since failing to make those preparations means losing the entire crop, the stakes are high, making the revenue stream potentially lucrative.

Or so they thought. The early user interviews confirmed the basic premise, yet they entirely challenged the envisioned solution. The Total Addressable Market (TAM), which is a function of both user volume and Average Revenue Per User (ARPU), shrank significantly. The revenue assumptions, in particular, proved overly optimistic.

I like this story because we invalidated assumptions before building anything. In fact, by the time we started developing the first prototype (with a lot of help from AI tools), the idea was significantly different from the one we started with.

Putting pre-development validation front and center was absolutely pivotal, as the main effort wasn’t creating a bunch of features but getting the earliest version in front of clients and gathering their feedback (a.k.a. learning). To make things spicier, the growing season imposes a sadline for delivery and validation. The next window of opportunity? Next year.

Building the wrong thing, even if you can do it cheaply, has costs that go well beyond the development effort.

Social Betting Startup

It’s a pre-AI story, but still relevant. The idea was to organize a social betting game around professional sports events. Two forces were at play initially.

The league season is what it is, which creates time constraints no one can control. Sadlines again. On the other hand, we suggested pacing the development. That would extend the runway while enabling early end-to-end validation of the product idea. Of course, the price to pay was that the early version of the product would be nowhere near as feature-rich as initially planned.

The founder decided to go fast. They wanted their vision turned into a working product before the season started. Higher pace and higher costs, but a sweet prize at the end, right?

Wrong. The whole thing fell apart late in the process because of legal constraints. In the founder’s defense, the legal ruling was not obvious, so it was more of a gray area than a blatant mistake. Still, the very fact that it was a risk should have been an argument for going slow rather than rushing.

If we were starting today with AI generating the code, the blocker would be exactly the same. Going slow would still be the better choice.

Real Estate Startup

The idea of disrupting the real estate industry is hardly original. There are plenty of startups aiming to achieve just that. Yet targeting a niche with some very specific (and non-obvious) constraints and characteristics, and building a product around them, has legs.

Unsurprisingly, our suggestion was to validate assumptions with throwaway prototypes (largely AI-generated) and limit the effort invested in an actual product. Luckily, the founder was receptive. Maybe not entirely receptive, but still.

The product idea had its technical challenges. However, they didn’t matter: before we could even get to them, we were blocked by a non-technical issue. Content availability, which had been taken as a given, was anything but.

That, in turn, triggered a pivot of the entire product. Which, sure enough, is a “Duh!” for a startup. But it also rendered the original specs largely irrelevant. Had we built to them, the effort and money would have been wasted.

Again, it doesn't matter how quick you are if you're just painting yourself into a corner.

The Pace of Development Means Nothing If You’re Building the Wrong Thing

I could go on, but by now you probably get the theme. In all these cases, pushing ahead with developing the original idea was (or would have been) a bad move. And that’s despite the fact that we went through a discovery phase in every single one of them. If anything, during discovery we aim to discourage (or at least challenge) founders.

If you’re building the wrong thing, getting there faster just means you dig yourself a bigger hole.

The critical effort in product development is not getting something out there. It’s figuring out the right thing to get out there. And yes, building and deploying it is one way to figure that out. Not an optimal one, though. In fact, I can hardly think of a more costly path.

The inquiry path should always be:

1. Identify the key hypothesis to validate.
2. Figure out the most effective way to verify whether it’s true.
3. Execute.

Occasionally, building the thing may be the most effective way. At some point, it may be the only way. I mean, you can’t be 100% sure people will use the product until there is a product to use. However, until we’re ready to close that very last gap, there are typically plenty of better validation methods.

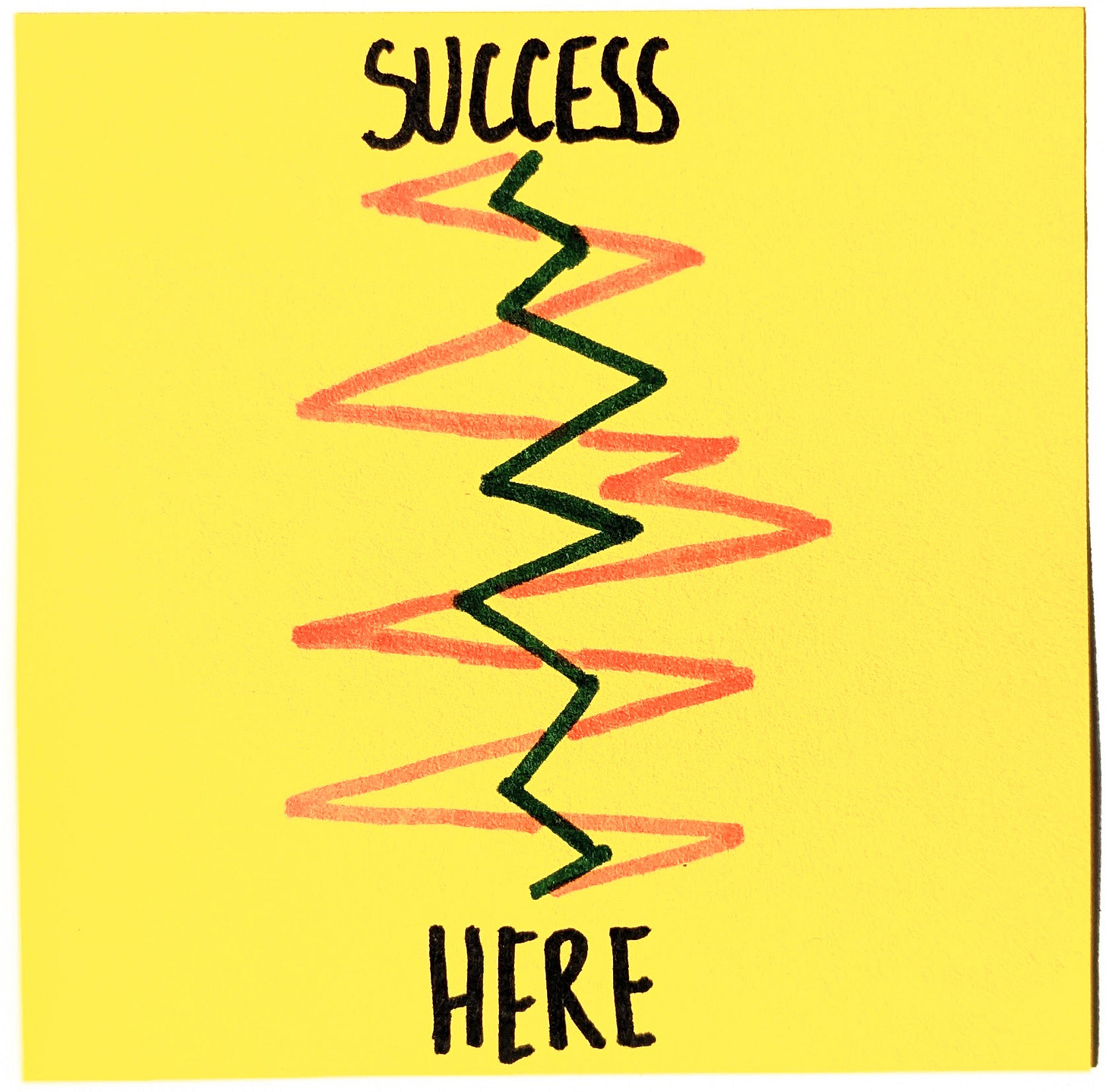

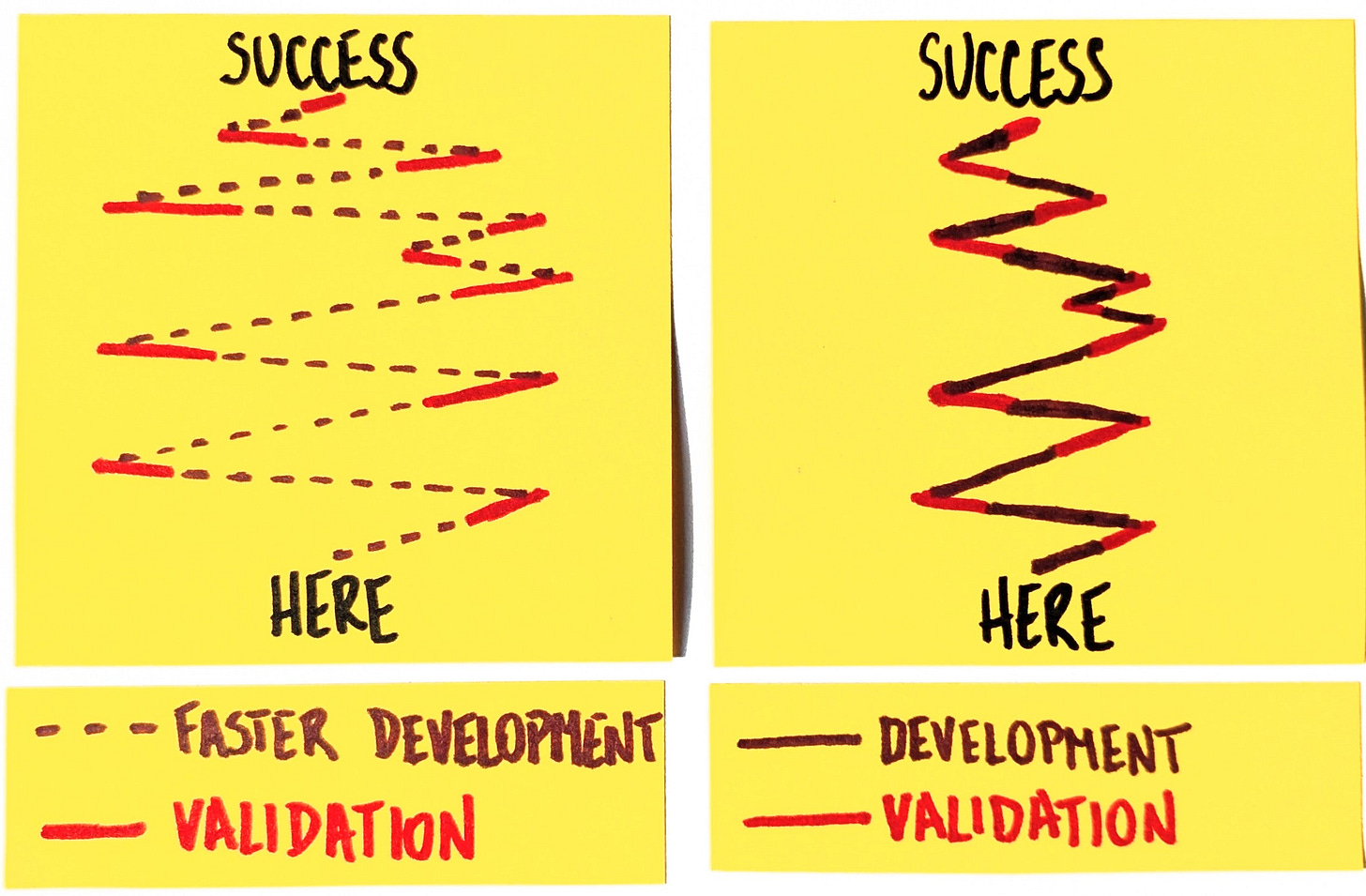

Zig-Zagging Amplitude Matters More than Pure Speed

One of our clients uses the metaphor of zig-zagging. He’s been in this business long enough to understand that there’s no straight path to success. As he puts it, there’s always zig-zagging.

Seen this way, an effective strategy for getting “there” is not necessarily running faster. It’s optimizing for course corrections. In other words, limiting the amplitude of the zig-zags.

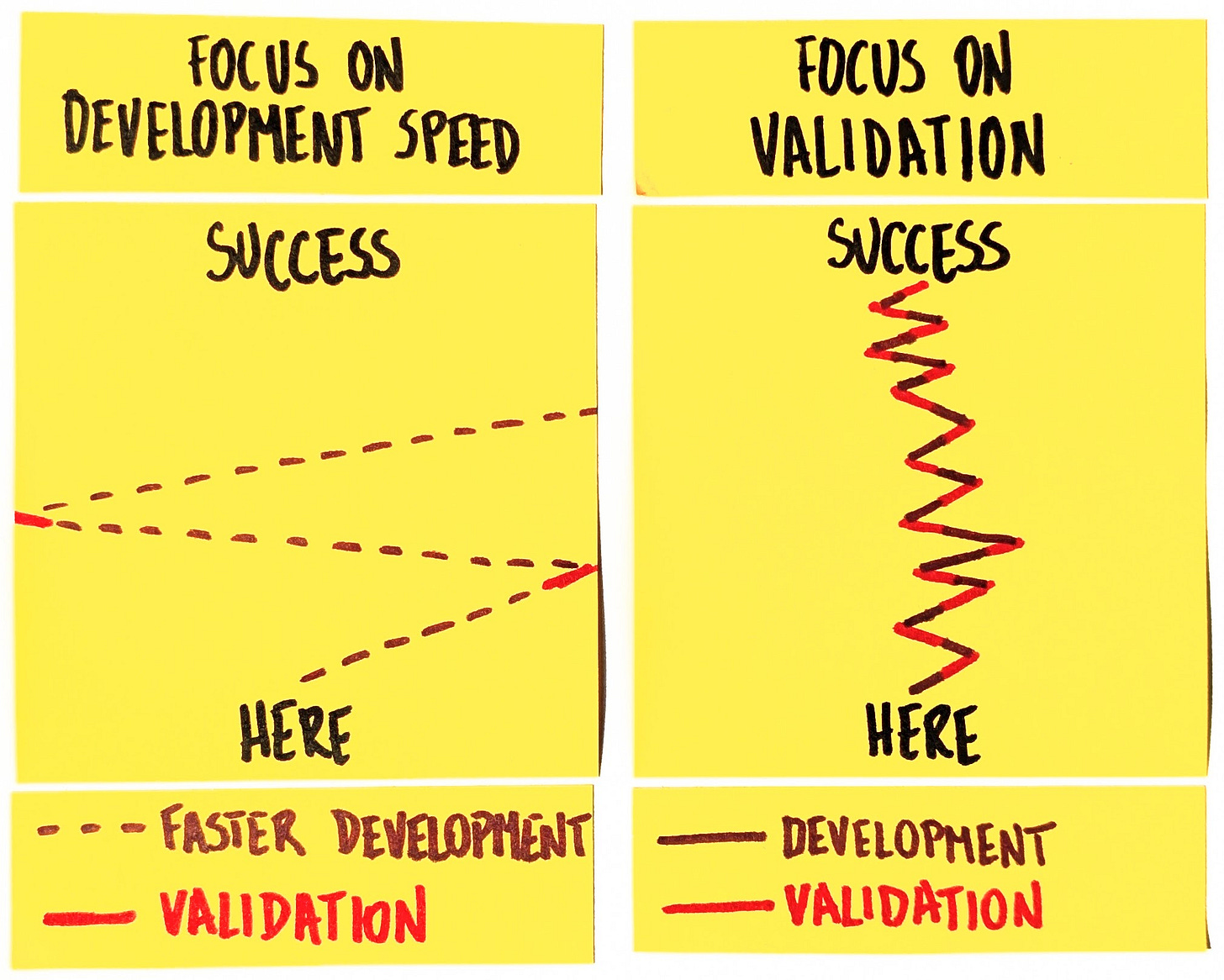

Now, the enthusiasm we see for AI largely centers on how fast we can develop new features. It’s not about the amplitude of zig-zags at all.

Granted, there is an upside. If we go faster, we reach each turn sooner than we otherwise would. There’s a cost, too, though. Since we go faster, we’re bound to carry more momentum into each swing, so the zig-zags get wider still.

Validation Is the Bottleneck

There’s another aspect we often miss. The speed-up applies to only part of the process. AI can indeed generate features fast, but that does not mean we get through the entire build-and-validate cycle equally rapidly.

And it’s the validation part that makes us adjust the course. Remove this, and we’d be going off the chart. And not in a desirable way.

If anything, the new setup tempts us to go further before evaluating and correcting. After all, building gets faster, while validation is just as slow as it was.
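To put rough numbers on why a faster build barely shortens the whole cycle, here is a minimal sketch of the arithmetic (essentially Amdahl's law applied to a build-and-validate cycle). It assumes a cycle splits cleanly into a building share and a validation share and that AI speeds up only the building part. The function name and the 40% / 10x figures are hypothetical, chosen purely for illustration.

```python
def cycle_speedup(build_fraction: float, build_speedup: float) -> float:
    """Overall speedup of one build-and-validate cycle (Amdahl's law).

    build_fraction: share of the original cycle time spent building (0..1).
    build_speedup: how many times faster building becomes; validation
    time is assumed to stay exactly the same.
    """
    if not 0.0 <= build_fraction <= 1.0:
        raise ValueError("build_fraction must be between 0 and 1")
    if build_speedup <= 0:
        raise ValueError("build_speedup must be positive")
    validation_fraction = 1.0 - build_fraction  # untouched by AI
    new_cycle_time = validation_fraction + build_fraction / build_speedup
    return 1.0 / new_cycle_time


# Hypothetical scenario: building is 40% of a cycle, and AI makes it 10x faster.
print(round(cycle_speedup(0.4, 10.0), 2))
```

With those made-up numbers, a tenfold faster build makes the whole cycle only about 1.56 times faster. Even infinitely fast building could never beat 1 / 0.6, roughly 1.67 times, because the validation share sets the ceiling.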

Now, none of this is new. Validation has been the bottleneck all along. We can get Claude Code to generate an MVP far more efficiently than we could develop one 5 years ago. Heck, at this stage, we can even ignore concerns about the long-term sustainability of AI-generated codebases.

None of this will magically make startups succeed, though. If we are building the wrong thing (and, statistically speaking, we are), development pace doesn’t matter nearly as much as we’re told.

“There is nothing so useless as doing efficiently that which should not be done at all.”

Peter Drucker

This post was not created with Claude Code.

웃https://okhuman.com/WuQPnA